Architected for Databricks

Sigma is an agentic execution layer, AI Applications platform, and spreadsheet UI on top of your Databricks warehouse. Build Sigma Agents and AI Applications without code.

Unified AI Ecosystem

Unify external agents, Sigma Agents, and agents built in Databricks to work faster on Sigma.

Agentic Workflows

Automate agentic action in AI Apps built on Sigma to write updates directly to Databricks.

Architectural Security

Every user, Sigma Agent, and AI Application natively inherits Databricks security policies.

More powerful together.

Build and deploy AI Apps on Sigma with Unity Catalog-grade security, governance, and scale. Easily manage inherited permissions, keep an immutable audit trail, and maximize your Databricks investment.

- Databricks-native architecture: Sigma runs live queries directly against your Delta tables, with security and governance guaranteed by Unity Catalog.

- One workspace, every user: A secure platform for every user to make the most of natively inherited Mosaic AI and Genie workspaces.

- Integrated enterprise AI: Build AI Applications that write rich, real-time, human-in-the-loop insights and actions directly to Databricks.

Ready to see what's possible?

Explore how Sigma Agents transform your governed data into automated action.

Architecture at a glance

Execute everything inside the warehouse boundary.

Sigma sits directly on Databricks—generating SQL, orchestrating Mosaic AI functions, and surfacing agent-driven insights through workbooks, AI Apps, and embedded experiences.

When a user asks a question, Sigma determines the optimal path: Databricks SQL for structured queries, or Databricks Genie for multi-step reasoning.

Warehouse-native execution

All AI processing, from SQL generation to orchestrating Genie Agents, runs directly on Databricks.

Inherited security

Sigma natively respects Unity Catalog governance so Sigma Agents can take secure action.

Deterministic outputs

Build AI Apps with Sigma Agents and inherited Genie workspaces for governed responses.

End-to-end lineage

Trace every query profile and Mosaic AI action across your lakehouse pipeline.

Under the hood

See the parts architects ask about: compilation, execution paths, governance boundaries, and what you can measure.

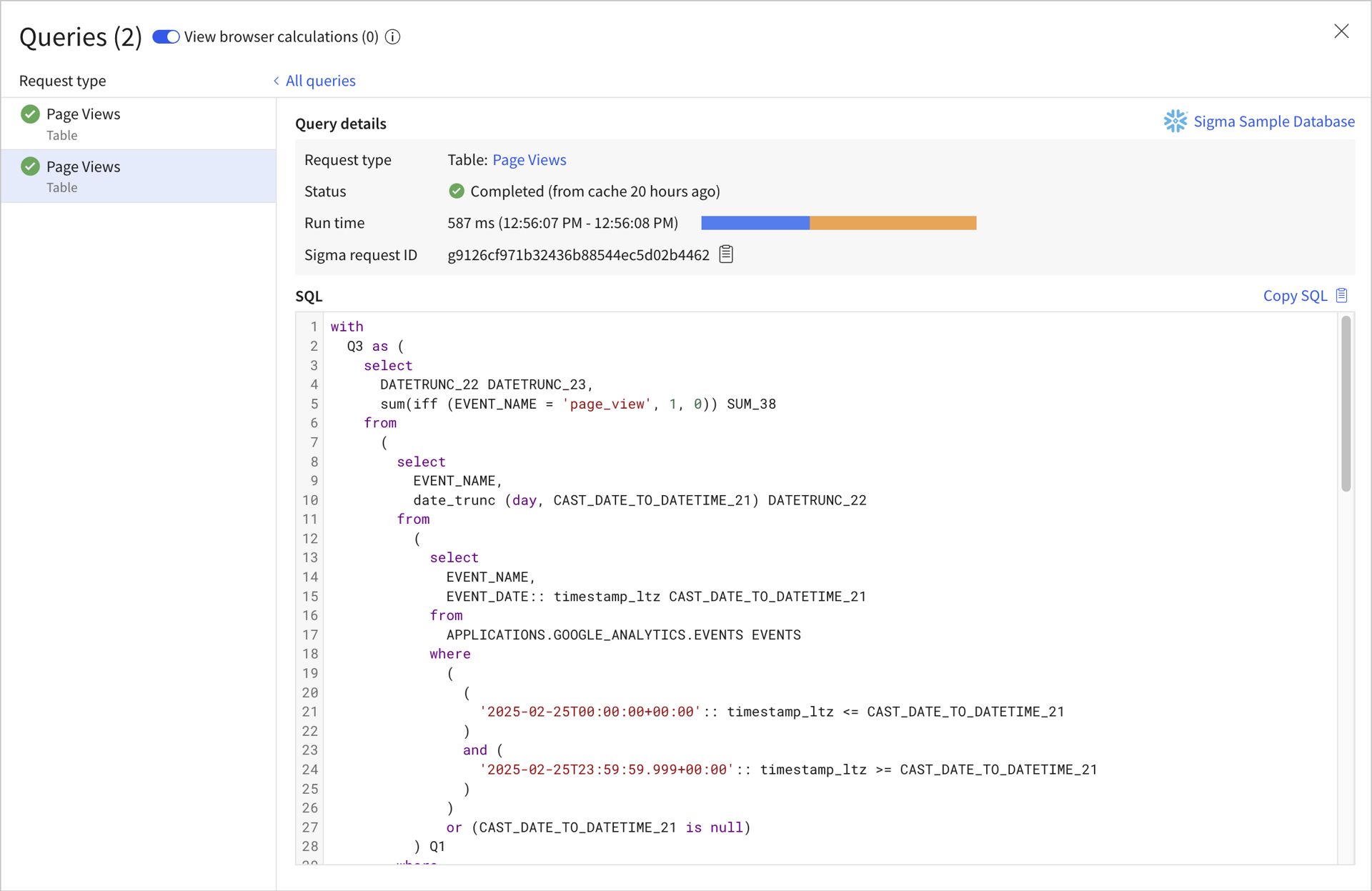

Workbooks generate warehouse-optimized SQL

Sigma translates spreadsheet operations into SQL on the fly. Switch statements become CASE logic, moving averages become window functions, and pivots compile to your warehouse's dialect.

Query History shows the generated SQL for every element, with timing breakdowns and request IDs for warehouse tuning.
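To make the spreadsheet-to-SQL translation above concrete, here is a minimal sketch of how a Switch could become CASE logic and a moving average could become a window function. The helper names (`sql_literal`, `switch_to_case`, `moving_average`) are hypothetical illustrations, not Sigma's compiler or API, and the SQL Sigma actually generates may look different.

```python
def sql_literal(value):
    """Render a Python value as a SQL literal (strings are quoted and escaped)."""
    if isinstance(value, str):
        return "'" + value.replace("'", "''") + "'"
    return str(value)


def switch_to_case(column, cases, default):
    """Render a spreadsheet-style Switch over `column` as a SQL CASE expression.

    `cases` is a list of (match_value, result) pairs. Hypothetical helper.
    """
    branches = " ".join(
        f"WHEN {column} = {sql_literal(match)} THEN {sql_literal(result)}"
        for match, result in cases
    )
    return f"CASE {branches} ELSE {sql_literal(default)} END"


def moving_average(column, order_by, preceding):
    """Render a trailing moving average as a SQL window function.

    The window covers the current row plus `preceding` earlier rows.
    """
    return (
        f"AVG({column}) OVER (ORDER BY {order_by} "
        f"ROWS BETWEEN {preceding} PRECEDING AND CURRENT ROW)"
    )


print(switch_to_case("status", [("A", "Active"), ("I", "Inactive")], "Unknown"))
print(moving_average("revenue", "order_date", 6))
```

The second call produces a 7-row trailing average (the current row plus the six before it), which is the kind of expression a warehouse can evaluate without moving data out.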

You can attribute spend and tune from real usage.

Sigma exposes query behavior including queue time, Sigma runtime, warehouse runtime, and result fetch time, plus admin usage dashboards and audit logs.
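As a rough illustration of how those four timing phases could be used for tuning, the sketch below sums them per request and reports which phase dominates. The `QueryTiming` class and its field names are assumptions made for this example; they are not the schema of Sigma's Query History or usage dashboards.

```python
from dataclasses import dataclass


@dataclass
class QueryTiming:
    """One query's timing breakdown in milliseconds (hypothetical field names)."""
    request_id: str
    queue_ms: int
    sigma_ms: int
    warehouse_ms: int
    fetch_ms: int

    def phases(self):
        """Return the four phases as a name -> milliseconds mapping."""
        return {
            "queue": self.queue_ms,
            "sigma": self.sigma_ms,
            "warehouse": self.warehouse_ms,
            "fetch": self.fetch_ms,
        }

    def total_ms(self):
        """Total time across all four phases."""
        return sum(self.phases().values())

    def dominant_phase(self):
        """Name of the phase that took the longest."""
        phases = self.phases()
        return max(phases, key=phases.get)


timing = QueryTiming("req-1", queue_ms=100, sigma_ms=50, warehouse_ms=800, fetch_ms=50)
print(timing.total_ms(), timing.dominant_phase())
```

A query dominated by queue time points toward warehouse sizing or concurrency, while one dominated by warehouse runtime points toward the SQL or the underlying tables.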

Drive access from the warehouse, Sigma, or both

Sigma can run as the user (OAuth) or as a service account, and optionally map users/teams to warehouse roles. Or you can define access rules in Sigma.

You pay for queries. Not curiosity.

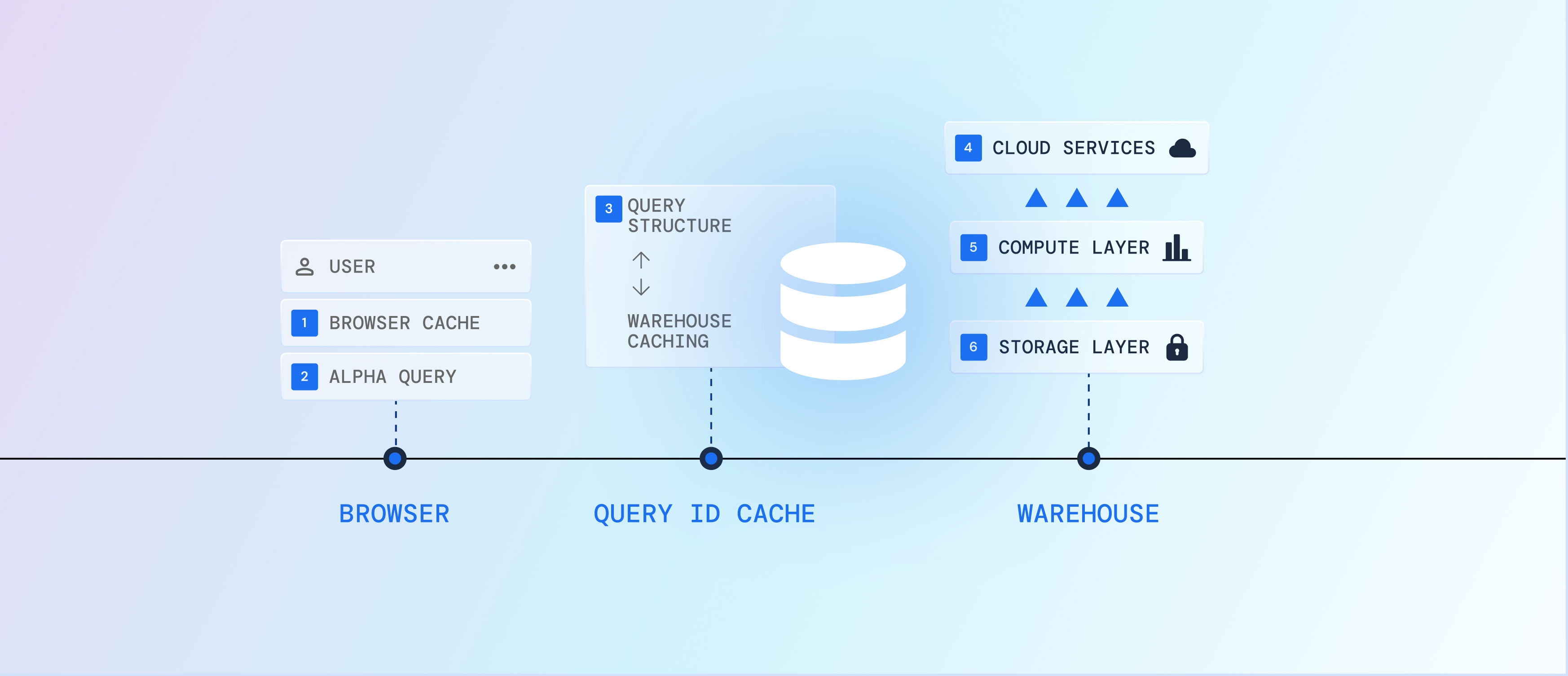

Not every click should wake up your warehouse. Sigma's hybrid query engine evaluates the fastest, lowest-cost execution path by starting in the browser, then escalating through query ID caching, and only then to the warehouse.

- 01

Reuse results already in the user's browser session. If Sigma can satisfy the request from what's already been returned, there's no network roundtrip, and no new warehouse work.

- 02

When the data is in-cache but the shape changed, Sigma recalculates "what changed" directly in the browser—think: new calcs, filters, regrouping—before issuing anything to the warehouse.

- 03

Sigma fingerprints the query structure and keeps a mapping to the warehouse query ID. If it's been run before (and your platform supports result caching), Sigma can fetch the cached result via that ID without storing your query results in Sigma.

- 04

If Sigma has to go back to the warehouse, the warehouse can still return results from its own cache when conditions allow (many platforms keep result caching for up to ~24 hours depending on changes and determinism).

- 05

Only then do you light up compute. But Sigma pushes optimized SQL and merges work when possible to reduce the number of separate hits needed to render a full page of tables, charts, and pivots.

- 06

Your data stays in your warehouse storage. And for repeat-heavy logic, materialize expensive datasets into reusable warehouse tables and refresh on your schedule, so "fresh" doesn't have to mean "expensive."
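The escalation path above can be sketched as a tiered lookup. This is a simplified illustration under stated assumptions, not Sigma's implementation: `HybridResolver`, `fingerprint`, and the two injected callables are hypothetical, in-browser recalculation (step 02) is omitted, and the warehouse's own result cache (step 04) is treated as part of the warehouse call.

```python
import hashlib


def fingerprint(sql):
    """Hash the query text after collapsing whitespace.

    Naive on purpose: it also collapses whitespace inside string literals.
    """
    normalized = " ".join(sql.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class HybridResolver:
    """Try the cheapest path first; run on the warehouse only as a last resort."""

    def __init__(self, run_on_warehouse, fetch_by_query_id):
        self.browser_results = {}  # fingerprint -> rows held in the browser session
        self.query_ids = {}        # fingerprint -> warehouse query ID (no rows stored)
        self.run_on_warehouse = run_on_warehouse    # callable(sql) -> (query_id, rows)
        self.fetch_by_query_id = fetch_by_query_id  # callable(query_id) -> rows or None

    def new_session(self):
        """Simulate a fresh browser session: results are gone, ID mapping remains."""
        self.browser_results.clear()

    def resolve(self, sql):
        """Return (path_taken, rows) for the query."""
        key = fingerprint(sql)

        # Step 01: reuse results already in the browser session.
        if key in self.browser_results:
            return "browser", self.browser_results[key]

        # Step 03: fetch the warehouse-cached result via the mapped query ID.
        query_id = self.query_ids.get(key)
        if query_id is not None:
            rows = self.fetch_by_query_id(query_id)
            if rows is not None:
                self.browser_results[key] = rows
                return "query_id_cache", rows

        # Step 05: run the query on the warehouse and remember its ID.
        query_id, rows = self.run_on_warehouse(sql)
        self.query_ids[key] = query_id
        self.browser_results[key] = rows
        return "warehouse", rows
```

Note that the resolver keeps only a fingerprint-to-query-ID mapping between sessions; the rows themselves live in the browser session or in the warehouse, which mirrors the "without storing your query results" point in step 03.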

Enterprise-Grade AI, Agents, Apps, and Analytics

Sigma is built for business teams that need flexibility without sacrificing governance or performance.

Zero-copy query model

Sigma doesn't require you to duplicate warehouse tables into a separate store to get interactivity. Results can be reused via cache paths instead of persisting a separate copy.

Private connectivity (AWS/Azure/GCP)

Support for PrivateLink / Private Service Connect patterns when your security team wants to keep traffic off the public internet.

OAuth or service account

Run per-user (OAuth) or via a service account. Choose what fits your governance model and auditing requirements.

Role-aware access control

Dynamically map users/teams to warehouse roles so row/column policies are enforced at query time.
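One way to picture the mapping is a lookup from a user's teams to the warehouse role their queries should run under, so that the warehouse applies its own row and column policies. The sketch below is an assumption-laden illustration: the team names, role names, and `resolve_role` helper are all hypothetical and do not reflect Sigma's configuration format.

```python
# Hypothetical team -> warehouse role mapping, for illustration only.
TEAM_TO_ROLE = {
    "finance": "FINANCE_ANALYST",
    "sales": "SALES_READER",
}


def resolve_role(user_teams, team_to_role, default_role=None):
    """Return the warehouse role for the first of the user's teams that is mapped.

    Raises PermissionError when no team is mapped and no default role is given,
    so an unmapped user never silently gets access.
    """
    for team in user_teams:
        role = team_to_role.get(team)
        if role is not None:
            return role
    if default_role is None:
        raise PermissionError("no warehouse role mapped for teams: %r" % (user_teams,))
    return default_role


print(resolve_role(["marketing", "finance"], TEAM_TO_ROLE))
```

Failing closed when nothing matches keeps the decision about access in the mapping and the warehouse policies rather than in a fallback.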

Audit logs for admin events

Track key admin activities (logins, permission changes, connection changes, and more) for operational visibility.

Compliance artifacts in the Trust Center

SOC 2 Type II, ISO/IEC 27001, GDPR/CCPA posture, and other reports live in one place for review.

SOC 2

ISO/IEC 27701

GDPR

CCPA

Fits into the rest of your stack

Sigma connects to your warehouse, and it also plays well with the systems around it, whether it's catalog, transformation, monitoring, or reverse ETL.

Reuse standardized metrics and keep business logic centralized.

Let users discover governed tables and definitions where they already look.

Keep an eye on pipeline and data quality issues that impact downstream analysis.

Operationalize curated outputs from the warehouse into downstream tools.

Sigma + Databricks FAQ

The questions that usually come up once someone starts mapping Sigma into their Databricks warehouse and governance model.